The Economist recently described the emerging problem of “gigantism,” by which it means ever more massive compute, in “The bigger is better approach to AI is running out of road” (article behind a paywall). It quotes research firm Epoch AI, which in 2022 (pre-ChatGPT) noted that “the computing power necessary to train a cutting-edge model was doubling every six to ten months.”

The article suggests various solutions to the gigantism problem. Some adjust the models themselves (parameter tuning, or “fuzzier maths” such as fewer decimal places and rounding), while others focus on how the models are implemented. Specifically, the article highlights the strengths and the pitfalls of what is currently the world’s premier programming language, Python:

“A great deal of AI programming is done in a language called Python. It is designed to be easy to use, freeing coders from the need to think about exactly how their programs will behave on the chips that run them. The price of abstracting such details away is slow code. Paying more attention to these implementation details can bring big benefits.” This, according to Thomas Wolf, chief science officer of Hugging Face, is “a huge part of the game at the moment.”

Time to get that Python diet started and slim it down for the modern age. We at KX and our partner, Treliant, are doing our bit.

KX recently open-sourced key portions of PyKX, giving developers an easy way to integrate with kdb vector programming, the kdb+ database, and fast time series analytics. PyKX seamlessly combines the flexibility of working in Python and in q, the language that underpins kdb, through efficient management of in-memory objects. This offers one way to bring the speed-ups and compute savings that slim down your Python apps. It also democratizes kdb: the (many) Python users can immediately access, with no learning curve, the 80x speed-ups and smaller compute footprint of kdb vector and time series processing.

For those looking to identify projects, Treliant, a capital markets system integrator and KX partner, has just produced a short “PyKX boosts Trade Analytics” example. In it, they demonstrate “the versatility of PyKX, and how it opens up the performance and vector based analytical capabilities for a time series data set.”

In their example, the authors set up a PyKX object and query it from Python. Then, using interprocess communication (IPC), they pull data for querying, demonstrating 20x the performance of Pandas for “calculating max, min and average price per symbol on a 10m row dataset loaded into memory.”

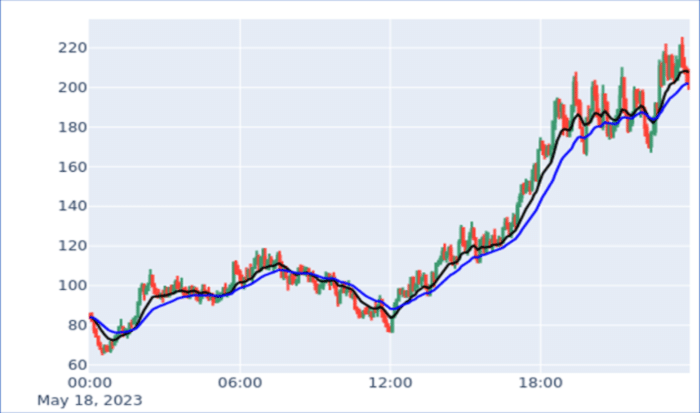

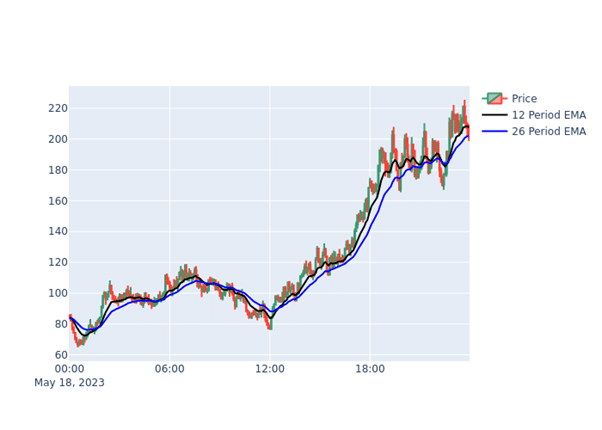
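To make the benchmarked calculation concrete, here is a minimal sketch of the Pandas side of that comparison: max, min and average price per symbol. The column names (`sym`, `price`) and the five-row sample are illustrative assumptions, not the authors' 10m row dataset, and the PyKX side of the benchmark is not reproduced here.

```python
import pandas as pd

# Hypothetical sample trades; the benchmark used a 10m row dataset.
trades = pd.DataFrame({
    "sym": ["AAPL", "MSFT", "AAPL", "MSFT", "AAPL"],
    "price": [150.0, 300.0, 152.0, 298.0, 151.0],
})

# Max, min and average price per symbol in a single grouped aggregation.
summary = trades.groupby("sym")["price"].agg(["max", "min", "mean"])
print(summary)
```

In q, the same aggregation would typically be written as a single qSQL statement along the lines of `select max price, min price, avg price by sym from trades`, which is the kind of query PyKX lets you issue from Python.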

Then, by running powerful q functions within Python for MACD (moving average convergence/divergence), the authors show how to expose the MACD functions to fetch and store data locally as a PyKX object. By converting the PyKX object to a Pandas dataframe, they show how to visualize a candlestick chart using the Python plotly library.
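For readers unfamiliar with the indicator, MACD can be sketched in a few lines of plain Python. This is not the authors' q implementation. It assumes the conventional 12/26/9 spans and an exponential moving average seeded with the first price, and the function names and the synthetic price series are hypothetical.

```python
def ema(values, span):
    """Exponential moving average, seeded with the first value."""
    alpha = 2.0 / (span + 1)
    out = []
    for v in values:
        if not out:
            out.append(v)  # seed: the first EMA value is the first observation
        else:
            out.append(alpha * v + (1 - alpha) * out[-1])
    return out


def macd(prices, fast=12, slow=26, signal=9):
    """Return the MACD line, signal line and histogram as lists."""
    fast_ema = ema(prices, fast)
    slow_ema = ema(prices, slow)
    macd_line = [f - s for f, s in zip(fast_ema, slow_ema)]
    signal_line = ema(macd_line, signal)
    histogram = [m - s for m, s in zip(macd_line, signal_line)]
    return macd_line, signal_line, histogram


# Synthetic, steadily rising prices (an assumption for illustration only).
prices = [100.0 + 0.5 * i for i in range(60)]
macd_line, signal_line, histogram = macd(prices)
print(macd_line[-1] > 0)  # in an uptrend the fast EMA sits above the slow EMA
```

Each step here is a loop over scalars, which is exactly the kind of work a vector language such as q expresses as whole-column operations.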

Consequently, PyKX helps “streamline and optimize interactions between the technologies,” adding fast code and performant compute to the fast development (with slower code) that makes Python so straightforward.

Download PyKX from PyPI

Licensed Features included with the Personal Edition of kdb Insights