If your job is to find something, and to find it quickly, you would expect that to be your focus. Yet in their search for alpha signals, arbitrage opportunities, and better execution, many quants seem to spend more time solving technical challenges around data than uncovering the valuable business opportunities hidden within it.

It’s all there in the data, they know that. But pulling it together from multiple sources, in its different formats and varying quality, poses dull and repetitive but unavoidable technical challenges that delay time to market while competitors are busy getting there first. And it’s not just time to market; it’s execution time too, particularly in environments where windows of opportunity are fleeting and being first matters.

The underlying problem is that data is hard to manage. It comes in high volumes – very high volumes – in bursts, duplicated, with gaps and varying data quality. Naming conventions and formats may vary by source. One challenge is capturing, validating, and normalizing those different data streams individually. Another is joining them into a coherent data set and extracting insights. But the biggest challenge is to capture, normalize, validate, and analyze simultaneously, producing insights not only from huge volumes of historical data but also from the real-time updates overlaid on it. That’s where an integrated streaming analytics platform comes in: it lets you provide traders and algorithms with up-to-the-second insights into enormous data sets such as trading data.
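To make the capture, validate, and normalize step concrete, here is a minimal Python sketch. It is illustrative only and is not KX code: the feed names, the field names in `FIELD_MAPS`, and the `Trade` record are all hypothetical. It maps two differently named sources onto one schema, rejects obviously invalid records, drops duplicates, and reports gaps in the sequence numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trade:
    seq: int      # per-source sequence number
    sym: str      # normalized (upper-case) symbol
    price: float
    size: int


# Hypothetical naming conventions for two sources (assumed for illustration).
FIELD_MAPS = {
    "feed_a": {"seq": "seq", "sym": "symbol", "price": "px", "size": "qty"},
    "feed_b": {"seq": "SeqNo", "sym": "Ticker", "price": "Price", "size": "Volume"},
}


def normalize(source, raw):
    """Map one raw record onto the common Trade schema and validate it."""
    m = FIELD_MAPS[source]
    trade = Trade(
        seq=int(raw[m["seq"]]),
        sym=str(raw[m["sym"]]).upper(),
        price=float(raw[m["price"]]),
        size=int(raw[m["size"]]),
    )
    if trade.price <= 0 or trade.size <= 0:
        raise ValueError(f"invalid trade from {source}: {raw}")
    return trade


def clean_stream(source, raw_records):
    """Return (trades, gaps): deduplicated trades and missing sequence numbers."""
    seen = set()
    trades = []
    for raw in raw_records:
        trade = normalize(source, raw)
        if trade.seq in seen:   # duplicate delivery, skip it
            continue
        seen.add(trade.seq)
        trades.append(trade)
    trades.sort(key=lambda t: t.seq)
    gaps = []
    for prev, cur in zip(trades, trades[1:]):
        gaps.extend(range(prev.seq + 1, cur.seq))
    return trades, gaps
```

Even this toy version shows why the work is repetitive: every new source needs its own mapping and validation rules before any analysis can begin.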

The Engine

A streaming analytics engine enables faster solutions to be delivered in less time. It does so by removing the data management burden from quants and data scientists, allowing them to focus on developing the data-centric trading practices that are increasingly essential in competitive, fast-moving markets. Making it easier for them to create innovative new applications and strategies delivers the business benefit: better trading outcomes and enhanced execution quality for dealers and their customers.

To that end, the KX streaming analytics platform is based on three design principles:

- Built for the modern data ecosystem

- Unified for richer analytics at scale and speed

- Enabling faster ROI

Building for the modern data ecosystem means recognizing that data organizations of the future will not be based around continually expanding data lakes. Instead, they will be organized around layers of data fabrics that enable any person in any part of an organization to access data, in any format and from any system, and to manipulate it in real time using the tools and techniques they are already skilled in. Doing that requires a combination of integration and interoperability across languages (Python, SQL) and technologies (Kafka, Postgres) to operate on data wherever it may reside – in the cloud, on-prem, or on the edge. Achieving it can deliver the power of data science across the enterprise.

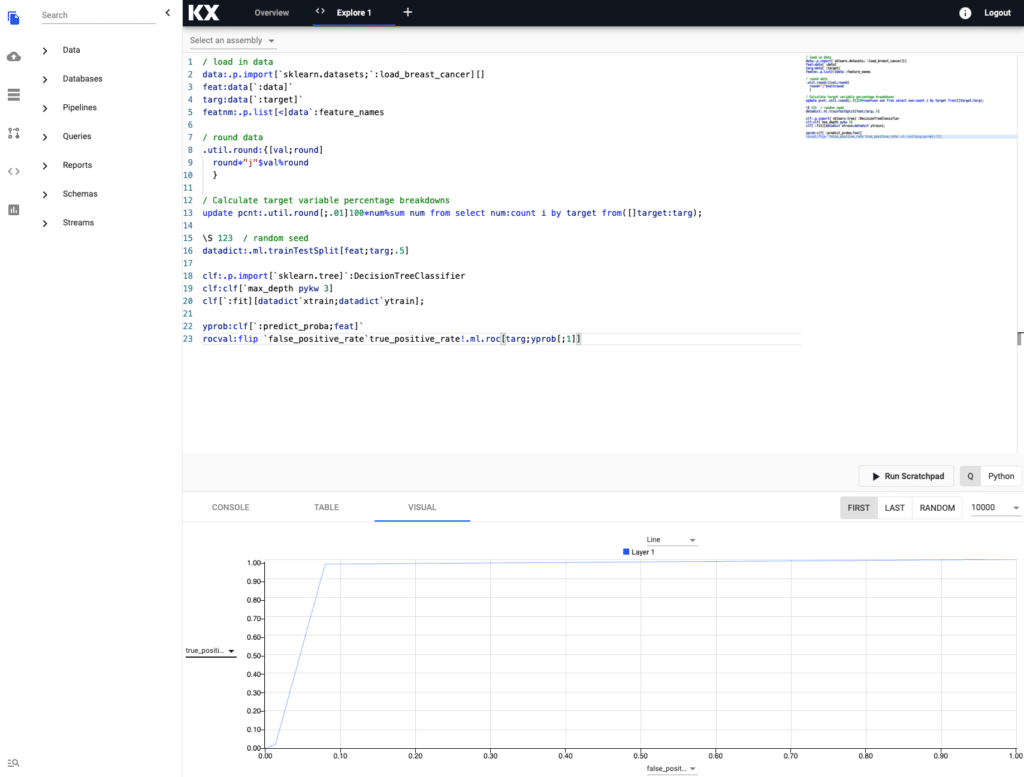

Scratchpad and analytic in kdb Insights

To gain that widespread adoption, it must be easy to access and use. Providing the ability to ingest, manage, analyze, enrich, and visualize all your data – both real-time and historical – on one unified platform is the starting point. It not only simplifies the deployment and operation of the solution but also enables users to combine the context of historical data with the immediacy of real-time events in their analytics and strategies. Independent, isolated, sandboxed backtesting capabilities within the same environment improve quality control by emulating production-level data and processing challenges. Having it all underpinned by widely adopted cloud-based technologies like Kubernetes provides the on-demand scalability necessary in volatile markets.

KX is renowned for its execution speed, being independently benchmarked by STAC as the highest-performing real-time streaming analytics solution. kdb Insights shortens the path to those results by providing a self-service environment in which quants can independently create, deploy, and share data pipelines that define the ingestion, transformation, analytics, ML models, and storage workflow. The result is a faster ROI and ongoing flexibility to respond quickly to new opportunities and market events as they arise.

The Value Provided

A streaming analytics platform enables organizations to deploy real-time, data-driven solutions more easily and quickly by providing curated data and tools that enable quants to build better pricing, execution, and hedging strategies to deliver superior trading performance.

A unified, scalable data store addresses the problem of data fragmentation and provides instant, low-latency access to high-granularity real-time and historical data across the organization. It enables users to replace the delayed insight of batch-based analysis with instantaneous responses to events.
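The difference between batch-based analysis and responding to each event can be sketched with a running volume-weighted average price (VWAP). This is a generic Python illustration, not the KX API: the `RunningVwap` class and its methods are hypothetical names. The aggregate is seeded once from historical trades and then updated in constant time as each new trade arrives, rather than being recomputed over the full history.

```python
class RunningVwap:
    """Incrementally maintained VWAP, seeded from historical (price, size) pairs."""

    def __init__(self, historical_trades=()):
        self._notional = 0.0   # running sum of price * size
        self._volume = 0       # running sum of size
        for price, size in historical_trades:
            self.update(price, size)

    def update(self, price, size):
        """Fold one new trade into the aggregate and return the current VWAP."""
        self._notional += price * size
        self._volume += size
        return self.value

    @property
    def value(self):
        """Current VWAP, or None if no volume has been seen yet."""
        return self._notional / self._volume if self._volume else None


# Usage: historical context first, then live updates as they arrive.
vwap = RunningVwap([(10.0, 100), (12.0, 100)])
latest = vwap.update(14.0, 200)
```

The same object serves both the historical and the real-time view, which is the point of keeping the two in one place.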

Support for familiar tools and data-science languages like SQL and Python enables developers to continue to use existing skills and libraries in conjunction with the native time-series functionality of KX. Moreover, having them integrated within the platform means models can run natively within kdb Insights, thereby improving performance, eliminating costly data movement, reducing latency, and avoiding duplication. The additional event processing capabilities allow data streams to be joined and models to be embedded so that they respond to events instantly, removing the inefficiency and latency of polling or running repeated queries.
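As a rough illustration of event processing as opposed to polling, the Python sketch below joins a trade stream to the latest quote for the same symbol and pushes the enriched record to a callback the moment the trade arrives. It is a generic sketch under stated assumptions, not kdb Insights code: `QuoteTradeJoiner`, its method names, and the `slippage` field are hypothetical.

```python
class QuoteTradeJoiner:
    """Joins each trade to the most recent quote for its symbol (an as-of join)."""

    def __init__(self, on_enriched):
        self._last_quote = {}            # sym -> (bid, ask)
        self._on_enriched = on_enriched  # callback invoked per enriched trade

    def on_quote(self, sym, bid, ask):
        """Remember the latest quote; nothing is emitted for quotes alone."""
        self._last_quote[sym] = (bid, ask)

    def on_trade(self, sym, price, size):
        """Enrich the trade with the prevailing mid price and emit it immediately."""
        quote = self._last_quote.get(sym)
        if quote is None:
            return  # no quote seen yet for this symbol, so nothing to join against
        bid, ask = quote
        mid = (bid + ask) / 2
        self._on_enriched(
            {"sym": sym, "price": price, "size": size, "mid": mid, "slippage": price - mid}
        )


# Usage: the consumer is called on each event instead of re-querying on a timer.
alerts = []
joiner = QuoteTradeJoiner(alerts.append)
joiner.on_quote("ABC", 10.0, 10.5)
joiner.on_trade("ABC", 10.5, 100)
```

A model could take the place of the callback, so that scoring happens as part of the same event rather than in a later query.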

Underneath it all, the data management platform takes care of the data lifecycle from ingestion to storage, including validation, normalization, naming, archiving, and exception management. The net result for quants is the ability mentioned earlier: to focus on business challenges and solve them faster by analyzing more data, more easily and more efficiently. The words of a customer say it best:

“As consumers, we can avoid 99% of the overhead of worrying about data implementation and focus solely on solving the business problems at hand.”

For more information on KX Insights, please visit our website, www.kx.com, or contact us at kxsales@kx.com.